Save the prompts, save the stack

You built the stack. Now build the eval. Gemma 4 26B vs Qwen 3 Coder 30B, DIY.

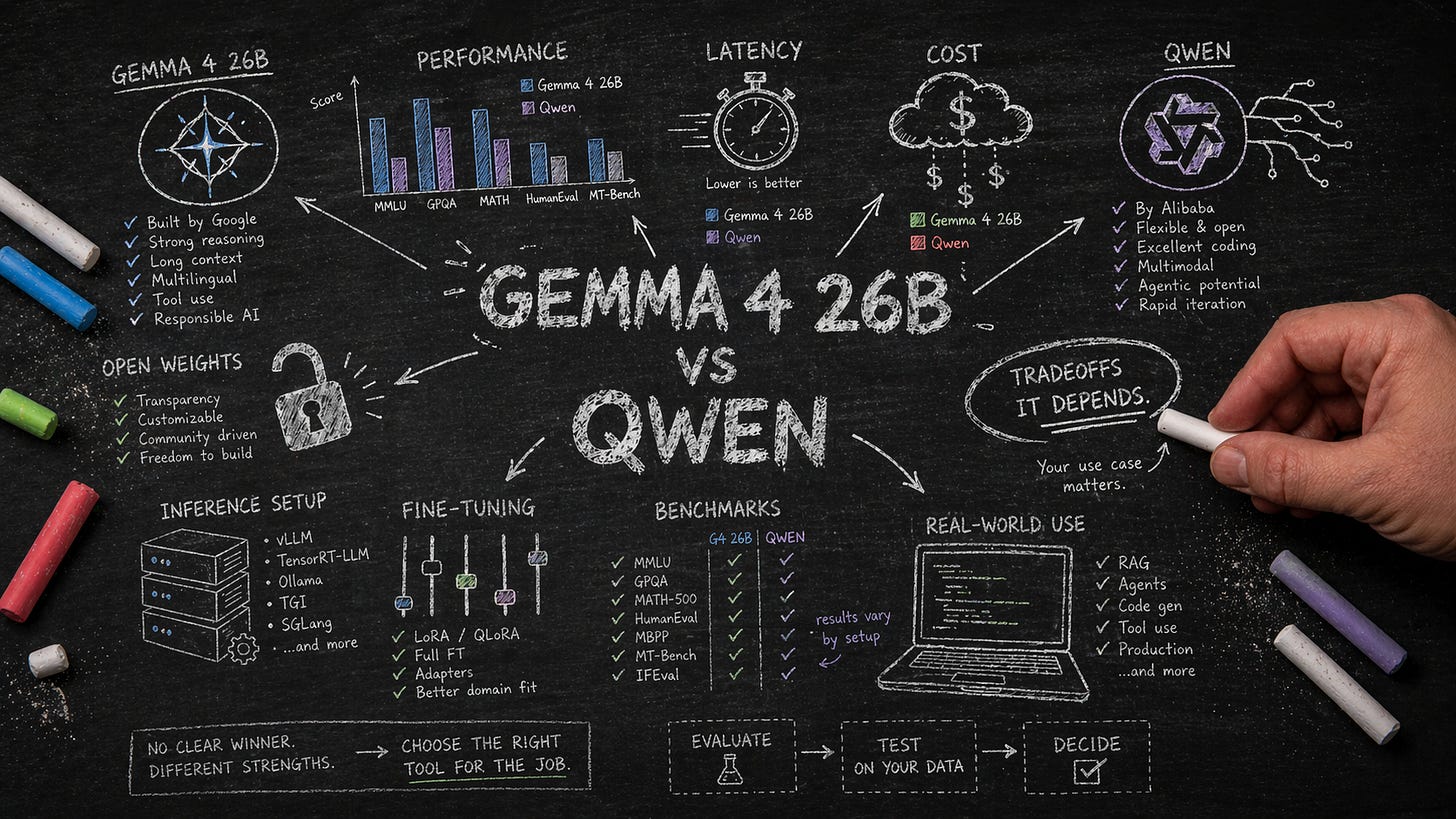

Three weeks ago, Gemma 4 26B A4B dropped. Google called it their most intelligent open model to date. The benchmark roundups are out: One put the larger 31B sibling at Master level on Codeforces, a tier most rated competitors never reach.

If you’ve been watching any of this, you know the feeling. Every new model announcement brings a flicker of the same question: Am I behind? Is this one better for what I do? This week is about where that feeling comes from — and how to put yourself on the other side of it.

Here’s what’s happening under the hood. OpenAI, Anthropic, Google — the labs running the hosted models you’d otherwise pay for — evaluate every release candidate against rubrics they own, on data they’ve collected. Why shouldn’t you?

This used to be optional. When models changed twice a year and everyone was running the same three, you could coast on vibes and leaderboards. That window is closed. Open-weight releases are accelerating — per the Epoch AI Capabilities Index, open models now trail state-of-the-art closed-source by about three months on average. The swap decision is now monthly, sometimes weekly.

And that’s the easy version. The world we’re building toward is smaller, more specialized models — one for your codebase, one for your data, one for the shape of your work — not a single hosted monolith doing everything poorly for everyone. Ownership at that scale isn’t just about running the weights. It’s about knowing which ones are worth running. Without a standing eval, you can’t tell. You’re either upgrading on faith, not upgrading at all, or — worse — running a fleet of models you can’t compare. All three are expensive.

Evaluation is how owners tell the difference. Renters don’t need it; the platform decides for them. Owners do.

If you followed along with the last DO version of the newsletter, you’ve got a local coding model running on your own machine — llama-server, Qwen3 Coder 30B, OpenCode wired in. A full AI coding setup on hardware you own. You own the runtime now. But evaluation — the step that tells you whether a new model is actually better for you — is still missing. Closing that gap is the point of this piece.

On a hosted API, you pick from a menu: capability, speed, cost. Locally, the selection problem gets wider: weights, runtime, quantization, prompt format, harness — the stack of choices a hosted API makes for you. And the evaluation step the platform quietly did on top? That just landed on your desk, too. Every release is a live swap decision — just like Gemma dropping against Qwen.

What you need is a way to save the prompts you’re already writing — the real work, the stuff you kept — so a new model can be tested against them. This is one of the reasons I built Morph: It versions your coding agent sessions the way Git versions your code: every prompt, tool call, and file edit is captured as an immutable trace. Those traces are what you evaluate a new model against. Read on to learn more about why a separate tool is needed — and how to use it.

The scorecards won’t save you

Your first instinct is probably to open the scorecards. There’s signal there. But there isn’t an answer.

Google’s Gemma 4 card puts the 26B at 77.1% on LiveCodeBench v6, a set of fresh competitive-coding problems designed to minimize training contamination. (For context: Gemma 3’s best score on the same benchmark was around 29%.) Alibaba’s Qwen 3.5-35B-A3B card reports a Codeforces figure plus a SWE-bench Verified score, where the model has to land a passing patch on a real GitHub issue. On paper, each card makes its own model look ahead on at least one row. Every row looks comparable. None of them actually are.

(Quick decode: “A4B” and “A3B” are active parameters — the portion doing work per token — versus the 26B and 35B totals on disk. Smaller active, faster inference.)

Here are three things worth noticing every time you read a model card:

What’s missing. Gemma’s card has no SWE-bench Verified row. Qwen’s does: 69.2. You can quote Qwen’s number, but you can’t compare it to Gemma’s, because Gemma didn’t post one. No row, no number. A public head-to-head on that benchmark doesn’t exist yet.

The methodology footnote. Qwen’s Codeforces result is footnoted “evaluated on our own query set” — they wrote the problems and scored themselves on them. Google’s Codeforces figure has no such footnote. Two numbers under the same header, problem sets we can’t confirm match. Not a comparison. A naming coincidence.

Arena scores measure preference, not capability. On Arena AI’s open-source leaderboard (April 19): Gemma 4 26B A4B at 1439 ± 8, Qwen 3.5-35B-A3B at 1396 ± 5. Pairwise human votes on prompts from whoever showed up — a vibes test at scale. Useful for chat quality; not an answer to whether a model will fix your broken tests.
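To put that 43-point gap in perspective, here is a rough sketch that converts a rating difference into an expected head-to-head preference rate. It assumes the leaderboard scores sit on the conventional Elo-style logistic scale (400 points per factor of ten in odds), which is an assumption about the leaderboard and not something its listing confirms here. The function name is illustrative.

```python
def expected_preference(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B on an Elo-style logistic curve.

    Assumption: ratings use the conventional 400-point scale.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


gemma, qwen = 1439, 1396  # leaderboard scores quoted above
p = expected_preference(gemma, qwen)
print(f"Gemma preferred in about {p:.0%} of pairwise votes")
```

Under that assumption the gap works out to roughly a 56/44 split in votes: a real preference, but a long way from "one model fixes your tests and the other doesn't."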

The structural problem, per Kapoor and Narayanan’s newsletter last week, is that whatever is precise enough to benchmark is precise enough to optimize for. And even if the comparisons were clean, they would still be apples-to-apples on someone else’s tasks, not yours, which is the asymmetry Morph is built to close.

Wait — you’re using an AI to grade an AI?

Yes. The technique is called LLM-as-a-judge, and it’s now standard practice. The canonical paper is Zheng et al., “Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena.” Frontier judge models agree with human evaluators over 80% of the time, roughly the rate at which humans agree with each other. The paper also names the known failure modes: position bias (favors the first answer it sees), verbosity bias (rewards longer answers), and self-enhancement bias (rates its own kin more kindly). Keep those in mind.
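One common guard against position bias in pairwise judging is to ask twice with the answers swapped and accept only a verdict that survives the swap. The sketch below shows the idea with stand-in judges. The `Judge` signature and the stub functions are hypothetical, not any library's API, and a real judge would call a model instead.

```python
from typing import Callable

# Hypothetical judge signature: (prompt, first_answer, second_answer) -> "first" or "second".
Judge = Callable[[str, str, str], str]


def judge_both_orders(judge: Judge, prompt: str, answer_a: str, answer_b: str) -> str:
    """Ask the judge twice with the answers swapped; accept only a consistent verdict."""
    first_pass = judge(prompt, answer_a, answer_b)   # A is shown first
    second_pass = judge(prompt, answer_b, answer_a)  # B is shown first

    a_wins_first = first_pass == "first"
    a_wins_second = second_pass == "second"

    if a_wins_first and a_wins_second:
        return "A"
    if not a_wins_first and not a_wins_second:
        return "B"
    return "inconsistent"  # verdict flipped with position: treat as a tie or re-judge


def always_first(prompt: str, first: str, second: str) -> str:
    """Stand-in judge with maximal position bias, for demonstration only."""
    return "first"


def prefers_longer(prompt: str, first: str, second: str) -> str:
    """Stand-in judge with pure verbosity bias, for demonstration only."""
    return "first" if len(first) >= len(second) else "second"


print(judge_both_orders(always_first, "fix the bug", "short", "a much longer answer"))    # inconsistent
print(judge_both_orders(prefers_longer, "fix the bug", "short", "a much longer answer"))  # B
```

The swap exposes the position-biased judge, but the verbosity-biased one sails through with a consistent verdict. Order swapping handles one failure mode, not all three.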

Frontier grades, open model runs. Call it distillation of judgment: the closed model’s evaluation sense compressed into a cheap scoring pass on your own work.

Which leaves one thing to sort out: What does the judge actually grade? Promptfoo, the open-source eval runner used later in this piece, needs prompts. The ones you’ve been writing all week. And unless you were already saving them, they’re gone.

Git is at the wrong level

Git is a tool for tracking changes. Every version of every file, hashed by content, snapshotted at every commit. Over time, those snapshots pile up into a complete, searchable history of every state the project has ever been in. Git is, at its core, a time machine for bytes.

That design was right for code written by humans. It may be the wrong design for code written with an agent. The file tree is now the output of a probabilistic process, and the interesting part isn’t the bytes. It’s the prompt that produced them. It’s the tool calls the agent made. It’s the three wrong turns before the agent settled on the one you kept. Git stores files, not runs. You commit, and the shell history scrolls past the prompt that got you there.

This is the problem I built Morph for. I’d commit a fix, look at it a week later, and have no idea what I’d asked the agent to land on it. Morph records at the right level: Every agent session becomes an immutable run with a full trace. It sits next to Git in .morph/ and doesn’t interfere. You keep committing with Git. Morph keeps the receipts.

The eval use case fell out of that. Once the prompts are saved, you can replay them against any model. Which is what Tap does. It’s another tool I built, one that reads Morph’s traces and turns them into a runnable eval.

Turn a week of work into a test suite

    tap extract \
      --provider ollama:qwen3-coder:30b \
      --judge-provider openai \
      --judge-model gpt-5.4 \
      --output eval.yaml

--provider names the model under test: the same Qwen3 Coder 30B you’ve been running all week. --judge-provider and --judge-model point at the frontier as grader.

Tap classifies each turn as CREATE_CODE, MODIFY_CODE, or FIX_BUG and generates a rubric per turn. More on what makes a rubric actually work in a minute.

Run it…

    export OPENAI_API_KEY=...
    promptfoo eval -c eval.yaml

The first run takes a while. Local model doing real inference, frontier grading every output. Grab a coffee.

The first time I tried it, I came back and the pass rate was zero. Not “low.” Not “mostly passing.” Zero out of 17 — on code that had shipped, was in main, and I’d been using all week.

Either Qwen was dramatically worse on a second pass or the eval was wrong. I opened the first failure.

The bug was an early return before a loop that should have run. Qwen deleted the right one. But the function also had a second return — a valid guard clause. And the judge, reading a rubric that said only “fix the bug and restore correct behavior,” saw a return, shrugged, and marked it wrong. Technically correct. Operationally useless.
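For readers who want to picture it, here is a hypothetical reconstruction of that shape of bug in Python. It is not the code from the session; the function and its names are invented for illustration.

```python
def total_positive_buggy(values):
    """Sum the positive numbers in `values` (buggy version)."""
    if not values:
        return 0      # valid guard clause for empty input
    total = 0
    return total      # BUG: stray early return, so the loop below never runs
    for v in values:
        if v > 0:
            total += v
    return total


def total_positive_fixed(values):
    """Same function after the fix: only the stray return is removed."""
    if not values:
        return 0      # guard clause stays; it was never the bug
    total = 0
    for v in values:
        if v > 0:
            total += v
    return total


print(total_positive_buggy([1, 2, -3]))  # 0
print(total_positive_fixed([1, 2, -3]))  # 3
```

The fixed version still has a return sitting above the loop. A judge told only to "fix the bug" has no way to know that one is supposed to stay.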

This is a known failure mode. Shen et al. (2026) show that naive rubrics can actually degrade an LLM judge’s accuracy below a no-rubric baseline — a 13-point drop on JudgeBench. The fix is decomposition: rubrics that enumerate the specific constructs the judge should and shouldn’t see, so the verdict hinges on something concrete rather than a general notion of correctness.

So I had Tap do rubric generation with an LLM, too. For each classified turn, it prompts a frontier model to produce a code-aware rubric tied to the actual change: which constructs must be preserved, which must change, what observable behavior constitutes a pass. One model writes the rubric, a different model judges against it.
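As a rough illustration of what "decomposed" means, the sketch below models a rubric as three explicit lists and renders them into a checklist a judge could be prompted with. The class, its field names, and the output layout are all hypothetical. This is not Tap's actual schema or prompt, only one way the three ingredients named above could be represented.

```python
from dataclasses import dataclass, field


@dataclass
class DecomposedRubric:
    """Hypothetical rubric shape for illustration; not Tap's actual schema."""
    task_type: str                                        # e.g. "FIX_BUG"
    must_change: list[str] = field(default_factory=list)
    must_preserve: list[str] = field(default_factory=list)
    passes_when: list[str] = field(default_factory=list)

    def to_judge_prompt(self) -> str:
        """Render the rubric as a plain-text checklist for the judge."""
        sections = [
            ("The output MUST change", self.must_change),
            ("The output MUST preserve", self.must_preserve),
            ("Mark PASS only if", self.passes_when),
        ]
        lines = [f"Task type: {self.task_type}"]
        for title, items in sections:
            if items:  # skip empty sections entirely
                lines.append(f"{title}:")
                lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)


rubric = DecomposedRubric(
    task_type="FIX_BUG",
    must_change=["the unconditional return placed before the loop is removed"],
    must_preserve=["the guard-clause return for empty input"],
    passes_when=["the loop body executes for non-empty input"],
)
print(rubric.to_judge_prompt())
```

With the guard clause listed under "must preserve," a judge that sees a return above the loop has a concrete reason to leave it alone.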

I reran it. Qwen came back at 100%, which makes sense: It’s the model that wrote the code. Graded against a rubric built for the change it made, it should pass.

The rubric was wrong, not the model. Generic rubrics are how you lie to yourself with a benchmark.

Now, the real test

One line in the YAML:

    providers:
      - ollama:qwen3-coder:30b
      - ollama:gemma4:26b

Rerun. Same eval set, same rubrics, two models side by side.

An honest comparison

Qwen 3 Coder 30B and Gemma 4 26B tied on my traces. Not “within a point.” Tied. Same pass rate, same failure modes.

It deserves a caveat. My traces are toy work — refactors, small agents, weekend experiments. The kind of prompts where two competent coding models should converge. There’s not much room between them on a 200-line utility. That’s the method telling you the easy cases really are close. The difference shows up as the work gets harder — more files, longer horizon, real tool-calling chains, code that has to integrate with something I didn’t write. That’s where models that look the same on HumanEval stop looking the same in practice.

It’s an argument for building this pipeline now, so it’s there when you need it. Once Morph is recording and Tap is extracting, rerunning an eval is a one-line YAML edit. A new model drops — add it to the providers list, rerun, read the diff. What was a project the first time becomes a background check the second. The ten minutes it takes you the morning a new Qwen or Gemma drops is the cost of never being surprised by them again.
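"Read the diff" is worth making concrete, because two models can post the same pass rate while failing different tests. The sketch below assumes you have reduced each model's run to a mapping from test id to pass/fail. That shape, the helper names, and the sample verdicts are all made up for illustration; it is not Promptfoo's output format and these are not results from this piece.

```python
def pass_rate(results: dict[str, bool]) -> float:
    """Fraction of tests that passed; 0.0 for an empty run."""
    return sum(results.values()) / len(results) if results else 0.0


def diff_models(baseline: dict[str, bool], candidate: dict[str, bool]) -> dict[str, list[str]]:
    """List tests the candidate regressed on or newly passes, over shared test ids."""
    shared = sorted(baseline.keys() & candidate.keys())
    return {
        "regressions": [t for t in shared if baseline[t] and not candidate[t]],
        "improvements": [t for t in shared if not baseline[t] and candidate[t]],
    }


# Made-up verdicts for illustration only.
current_model = {"turn-01": True, "turn-02": True, "turn-03": False}
new_model = {"turn-01": True, "turn-02": False, "turn-03": True}

print(f"{pass_rate(current_model):.2f} vs {pass_rate(new_model):.2f}")
print(diff_models(current_model, new_model))
```

In this toy data the headline numbers match, yet the new model breaks one turn and fixes another. The per-test diff is where a swap decision actually gets made.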

Generic benchmarks are a tiebreaker, not a decision. The set that matters is the one you’re generating every day. Morph saves it. Tap extracts it. Promptfoo runs it. The frontier grades it. You decide.

One ask. If you're coding with agents, install Morph and let it record for a week. It's open source, it sits next to Git, it doesn't care what agent you run. I've been using it on my own work, but "works on my machine" is the weakest eval there is. The pipeline in this piece only matters if the trace layer is solid across codebases that aren't mine. Break it on yours. File issues. Tell me what's missing. The whole thing gets better the more people it's recording for.

Your evals are already on your laptop. Go look at them — and tell me what you find. Drop what you ran, what you saw, and where the eval surprised you in the comments. I’ll read every one and fold the best into the next issue.